Victor de Lafuente, Mehrdad Jazayeri, and Michael N. Shadlen. Representation of Accumulating Evidence for a Decision in Two Parietal Areas. Journal of Neuroscience, 2015, 35(10): 4306-4318.

by Farzaneh Olianeghad (Department of Electrical Engineering, Shahid Rajaee Teacher Training University, Tehran, Iran)

A key question has intrigued philosophers, psychologists, and neuroscientists for a long time. We execute decisions through actions. Actions are movements that we make with our hands, feet, or even our eyes. Indeed, speech is also a form of movement. (Some believe that abstract thought is also a form of navigation; Immanuel Kant comes to mind.) Different parts of the brain control these different movements. Does this mean that the DECISION is also similarly DISTRIBUTED? Isn't it the case that one single common decision maker (the thing we intuitively call ME) controls all of these diverse actions? Or is it the case that each movement has its own dedicated decision process too? Would that mean the brain is like a kingdom ruled by many kings?

Psychologists have a favorite model for how we make simple decisions: when deliberating between two alternatives (Figure 1), our brain continuously samples evidence about the state of the world and accumulates those samples over time. When the accumulated evidence reaches a convincing level, the decision is made. Many studies in the monkey brain have identified the neural representation of such an evolving decision variable. Since decisions can be conveyed through different actions, experiments that used different movements (e.g. hand or eye movements) found that different brain areas were engaged in evidence accumulation. But each of those previous studies examined only one type of movement.
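The accumulate-to-threshold idea described above can be made concrete with a small simulation. The following is only a minimal sketch, not the analysis used in the paper: the function name `accumulate_to_bound` and all parameter values (`drift`, `bound`, `noise_sd`, `max_steps`) are assumptions chosen for illustration. Each time step adds one noisy evidence sample to a running total, and a choice is made as soon as the total crosses an upper or lower bound.

```python
import random


def accumulate_to_bound(drift, bound, noise_sd, max_steps, rng):
    """Sum noisy evidence samples until the total crosses +bound or -bound.

    drift     : average evidence per step (positive favors "right"); illustrative
    bound     : how much accumulated evidence counts as "convincing"; illustrative
    noise_sd  : standard deviation of the noise on each sample; illustrative
    max_steps : give up (no choice) after this many samples
    rng       : a random.Random instance, so runs can be reproduced
    """
    total = 0.0
    for step in range(1, max_steps + 1):
        # One momentary sample of evidence: signal plus Gaussian noise.
        total += drift + rng.gauss(0.0, noise_sd)
        if total >= bound:
            return "right", step
        if total <= -bound:
            return "left", step
    # The bound was never reached within the allowed number of samples.
    return None, max_steps


if __name__ == "__main__":
    rng = random.Random(1)
    trials = [accumulate_to_bound(0.05, 1.0, 0.3, 1000, rng) for _ in range(200)]
    n_right = sum(1 for choice, _ in trials if choice == "right")
    mean_steps = sum(steps for _, steps in trials) / len(trials)
    print(f"chose right on {n_right} of {len(trials)} trials")
    print(f"mean number of samples before deciding: {mean_steps:.1f}")
```

In this toy version, a stronger `drift` (clearer evidence) tends to give faster and more accurate choices, while a higher `bound` trades speed for accuracy. That qualitative behavior is what makes the model a popular account of simple decisions.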

De Lafuente and colleagues compared evidence accumulation when the action was performed by the eyes (gaze direction, Figure 1 top) or by the hand (reach movement, Figure 1 bottom). They measured the activity of two areas of the rhesus monkey brain (MIP and LIP) while the monkey decided whether a displayed movie was moving to the left or to the right, reporting its choice sometimes with a hand movement and sometimes with eye gaze.

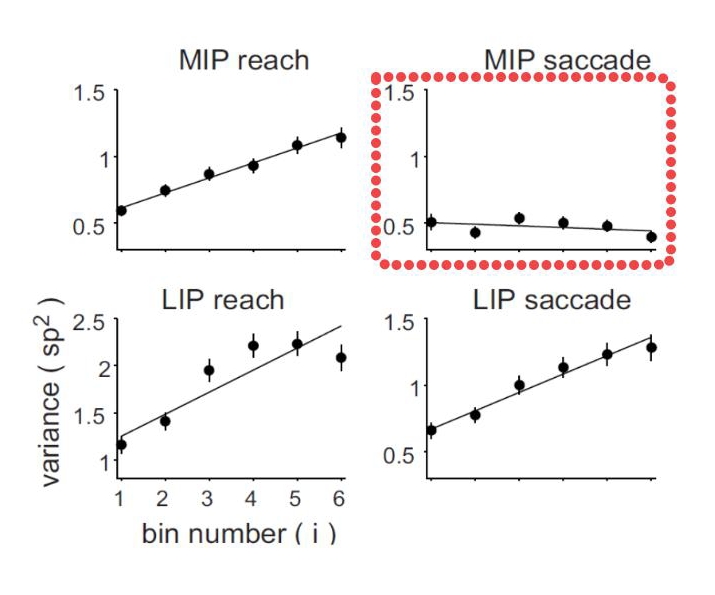

The result was complicated. Some parts of the data supported the less intuitive, parallel (many kings) hypothesis. When monkeys acted with eye gaze, neural activity in the eye gaze area (LIP) was indicative of choice, but activity in the brain area coding for hand position (MIP) was not. This suggested that LIP has its own decision-making system that is not shared by MIP.

On the other hand, when monkeys acted by reaching, both brain areas (LIP and MIP) were indicative of choice. This supported the simpler, shared decision maker (unified ME) hypothesis. In other words, MIP neurons were modality specific: they reflected the decision only when it was reported by reaching. LIP neurons were modality general: they reflected the decision whether it was reported by eye or by hand. The search for a unified ME failed to produce a decisive answer. Empirical science is often like that. Some questions are hard.
